AgentGuard: AI Visibility & Governance

Continuous discovery, policy-based governance, and real-time enforcement for AI agents and embedded AI features across enterprise SaaS environments.

AppOmni

Executive Summary

Overview

- Product: AgentGuard: AI Visibility & Governance

- Company: AppOmni

- Role: Lead Product Designer

- Timeline: 2 months

Key Contributions

- Defined the four-layer AI inventory model (Apps, Features, Agents, Models) that anchors the product

- Designed the end-to-end Discover → Configure → Enforce workflow

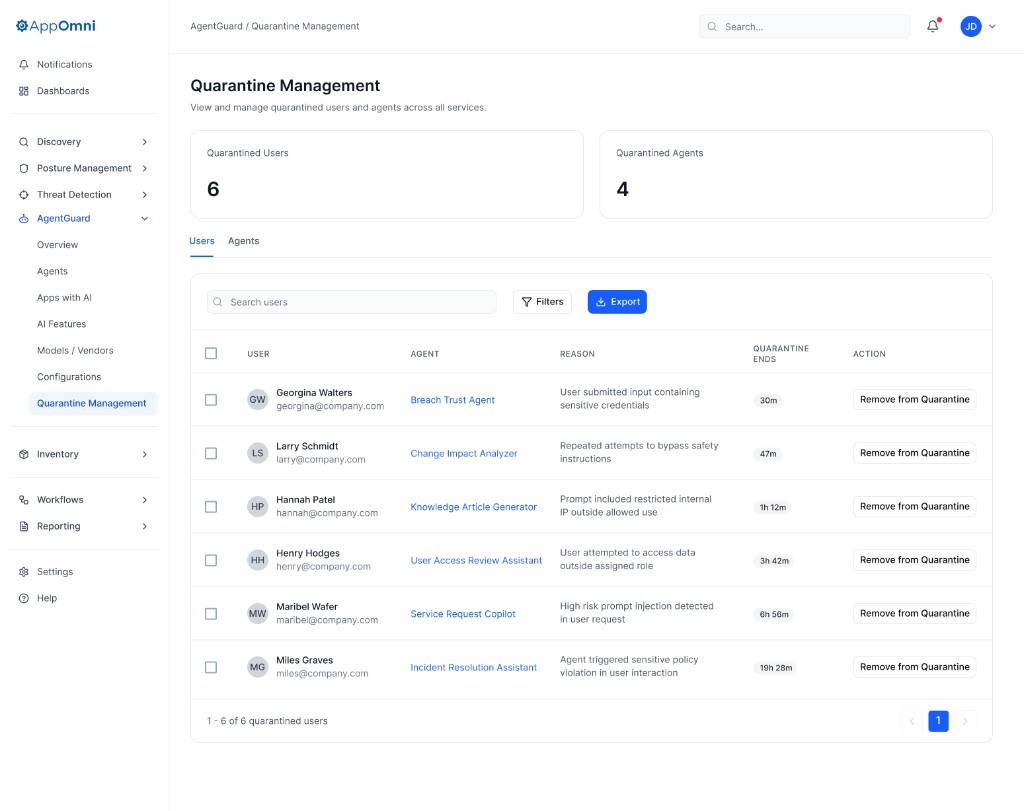

- Designed real-time prompt blocking and quarantine flows for security teams

Outcomes

- 30+ enterprise customers requested access before launch. Demand exceeded pilot capacity

- ~25 pilot customers onboarded, monitoring an average of 200+ AI agents per organization

- One pilot customer surfaced 4,000+ active agents that were previously invisible to their security team

- Replaced multi-week manual AI audits with continuous, real-time inventory across 15+ SaaS integrations at launch (ServiceNow, Microsoft Copilot, Zendesk, IBM Watson X, Akamai, Cisco, OpenAI, Anthropic, MuleSoft, Salesforce, and more)

My Role

Role

Lead Product Designer

Tools

Figma, Cursor, Figma Make, FigJam, and Jira

Timeline

2 months

I owned:

- Defining the four-layer AI inventory model and information architecture

- Designing the discover → configure → enforce workflow end-to-end

- Risk signal design for privilege, data access, and external model exposure

- Policy creation, prompt blocking, and quarantine flows

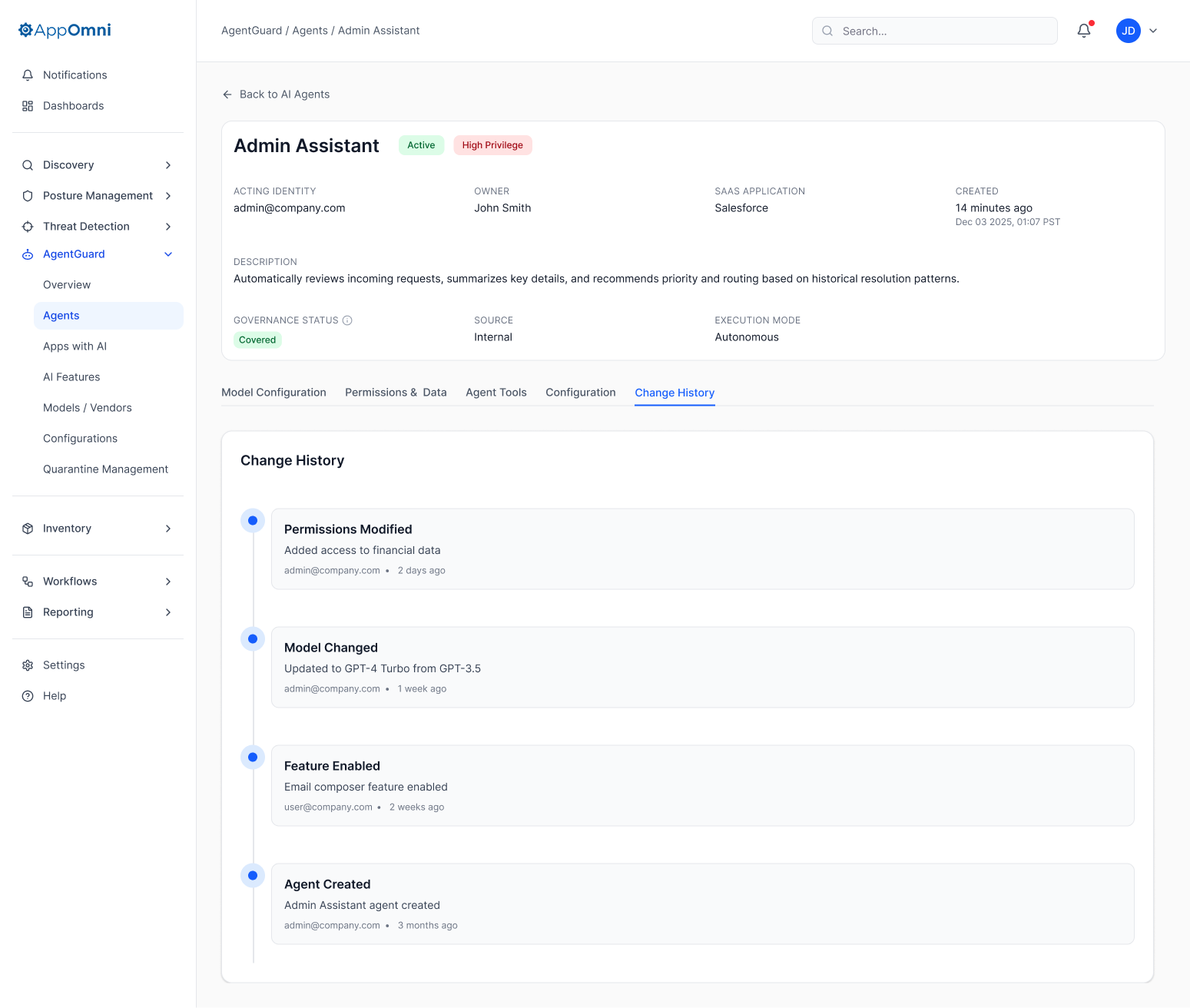

- Audit and change history experiences

- Partnering with engineering and security research to model AI risk across SaaS platforms

Context & Problem

AI was landing in Salesforce, Slack, Notion, GitHub, and more as embedded copilots, agents, and external model hooks, faster than most security teams could track. The common response was a manual one: spreadsheets, point-in-time audits, and tribal knowledge. These audits could take weeks and were outdated the moment they were finished, because users across the organization were continuously creating new agents on SaaS platforms.

Security teams lacked visibility into:

- Which SaaS apps had AI enabled

- What AI features were active

- Which AI agents existed

- What data those agents could access

- Which external models were connected

This created a new attack surface that no existing tool was built to govern. In one case, a major enterprise customer made AI visibility a condition of renewing their SaaS security contract, a clear signal that the market had reached an inflection point.

What security teams needed wasn't just an inventory. It was a workflow with three jobs to be done: discover the full AI surface area across applications, features, agents, and models; configure policy that could be enforced in product, not stored in a spreadsheet; and enforce in real time with signal-rich monitoring, quarantine, and prompt blocking. Those three jobs became the spine of AgentGuard.

Research & Process

The work started as a cross-functional effort with engineering and product. We had a strong hypothesis that AI governance was a workflow problem rather than a reporting problem, but we needed to ground that hypothesis in real customer environments before designing anything.

We interviewed seven enterprise customers who had already raised AI visibility as an unmet need. Rather than asking what they wanted us to build, we asked what would have to be true for the product to be viable in their environment: which integrations had to be there at launch, what enforcement actions had to be available, and what evidence they needed to defend a policy decision in an audit. Those conversations defined the bar for the MVP.

From the interviews, the product manager, lead engineer, and I built a user journey map together, capturing how a security team moves from suspecting they have an AI footprint, to discovering it, to writing their first policy, to handling their first incident. Mapping the journey jointly with engineering and product surfaced constraints early: which signals were realistically available from each SaaS integration, which enforcement actions were technically feasible at launch, and where the design had to bend around platform reality.

The AppOmni Admin

AgentGuard

User Description

A security or IT administrator responsible for protecting SaaS applications from AI-related risk. This user configures, monitors, and enforces AI security controls across AI agents (e.g., ServiceNow Agents, Microsoft Copilot, Salesforce Agentforce) to prevent prompt abuse, sensitive data leakage, and unsafe agent behavior.

Goals

The AppOmni Admin wants to securely enable AI agents across the organization while maintaining control, visibility, and consistency. Their goal is to prevent data leakage and AI misuse without disrupting legitimate work, and to quickly understand and act on risk when issues arise.

Pain Points

The AgentGuard Admin often lacks clear visibility into AI agent behavior and whether protections are actively enforcing or only monitoring. Platform limitations, high-impact configuration decisions, and fragmented investigation workflows make it harder to assess risk and respond quickly with confidence.

Typical Job Titles

Security Engineer, Cloud Security Engineer, System Owner, or Platform Owner

Activate AI security

Understand current AI agent coverage and risk

Define AgentGuard policies and enforcement modes

Review AI agent behavior and detections

Analyze violations, users, and agents at risk

Quarantine users or agents and apply controls

Tune thresholds, coverage, and enforcement over time

Share AI security posture and outcomes

I then created a user flow diagram to map every action a user might take inside the product: every entry point, every drill-down, every enforcement decision. That flow became the spec the MVP was built against.

From MVP to refined product

I built the initial MVP wireframes in Figma Make, working from the journey map and user flow as a structural guide. The first version covered just three pages (the Agent Inventory, Quarantine Management, and Configurations), the pieces every customer interview had pointed to as table-stakes. The remaining product surface emerged through refinement, not upfront design.

Refinements came from internal review with engineering and product, and from a second pass against the customer needs we'd captured. Three additions came out of that pass and reshaped the inventory model:

- AI Features page, added once it became clear that many SaaS apps ship multiple AI capabilities (summarization, embedded copilots, workflow automation) that aren't quite agents but carry similar risks. Without a Features layer, those capabilities had nowhere to live in the inventory.

- Apps with AI page, added so security teams could answer the first question they always ask ("which of our apps even have AI turned on?") without drilling through agents to figure it out.

- Models / Vendors page, added because external model access is one of the clearest exfiltration paths, and data governance teams needed the same line of sight on model providers that security has on conventional SaaS apps.

- Dashboard, added as the roll-up layer once the four inventory views existed, giving leadership and the SOC a day-one answer to "what is running right now?" before they open any individual page.

Each addition was a response to a specific gap, not a feature for its own sake, and each one earned its place by mapping back to a question the customer interviews had already raised. By the time the MVP was refined into the shipping product, the four-layer inventory model (Apps → Features → Agents → Models) wasn't an a priori framework. It was what survived contact with real customer needs.

Solution Overview

AgentGuard grounds governance in a four-layer view of the AI surface: Apps with AI, AI features, agents, and models or vendors. That inventory is the evidence layer that policies, enforcement, and audit build on top of, not a substitute for them.

The diagram below is the conceptual system map: the Discover / Configure / Enforce workflow on top, with the four inventory layers underneath.

STAGE 1: DISCOVER

Apps with AI

The first layer of the inventory surfaces which SaaS applications have AI enabled. Security teams can see at a glance which apps use AI, then drill in for configuration, roll-up metrics, and risk at the app level.

AI Features

Many SaaS applications ship multiple AI capabilities at once: assistants, summarization, workflow automation, and embedded copilots. A dedicated features inventory makes those capabilities first-class, including model and external connection signals per feature.

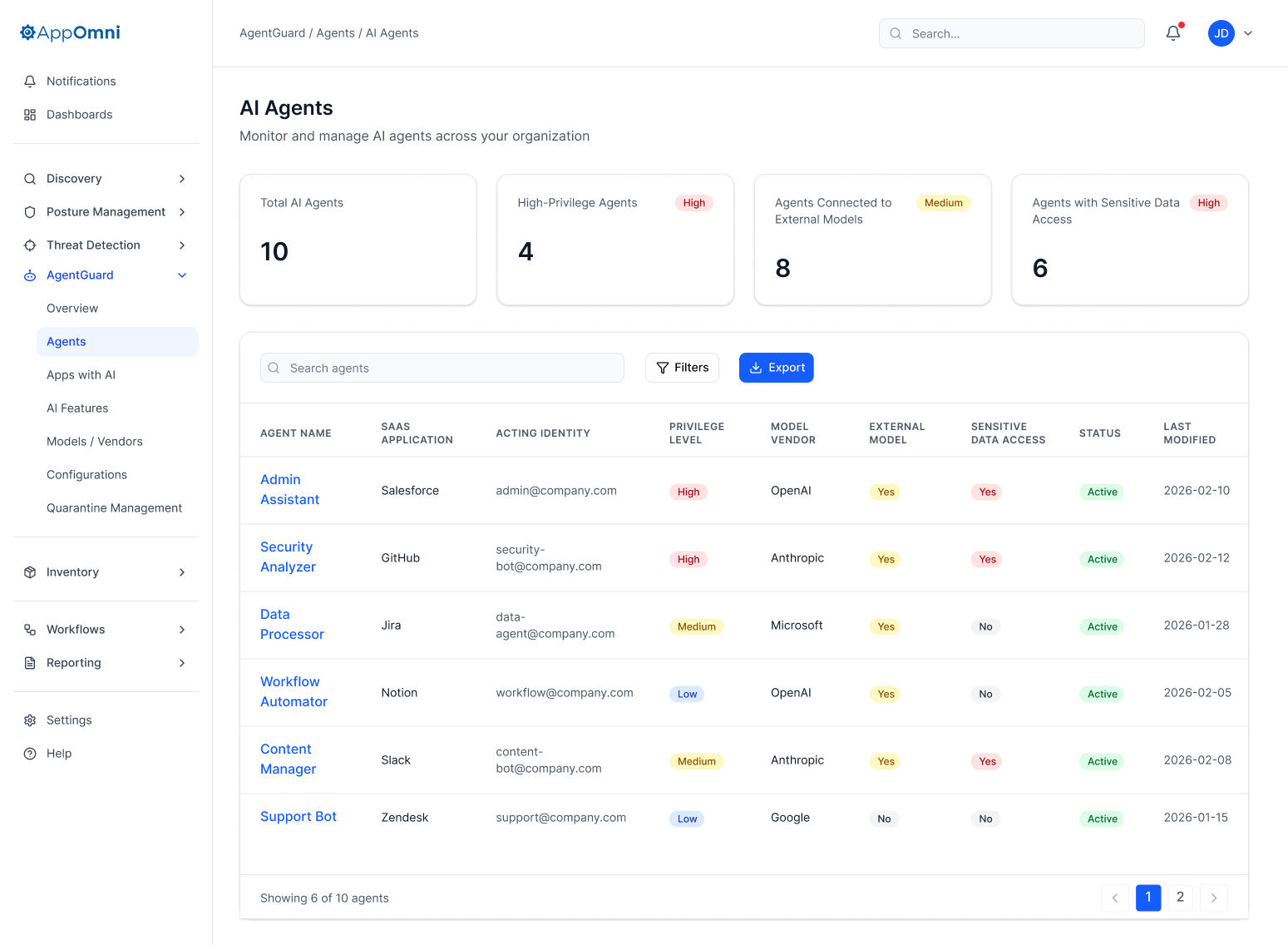

AI Agents

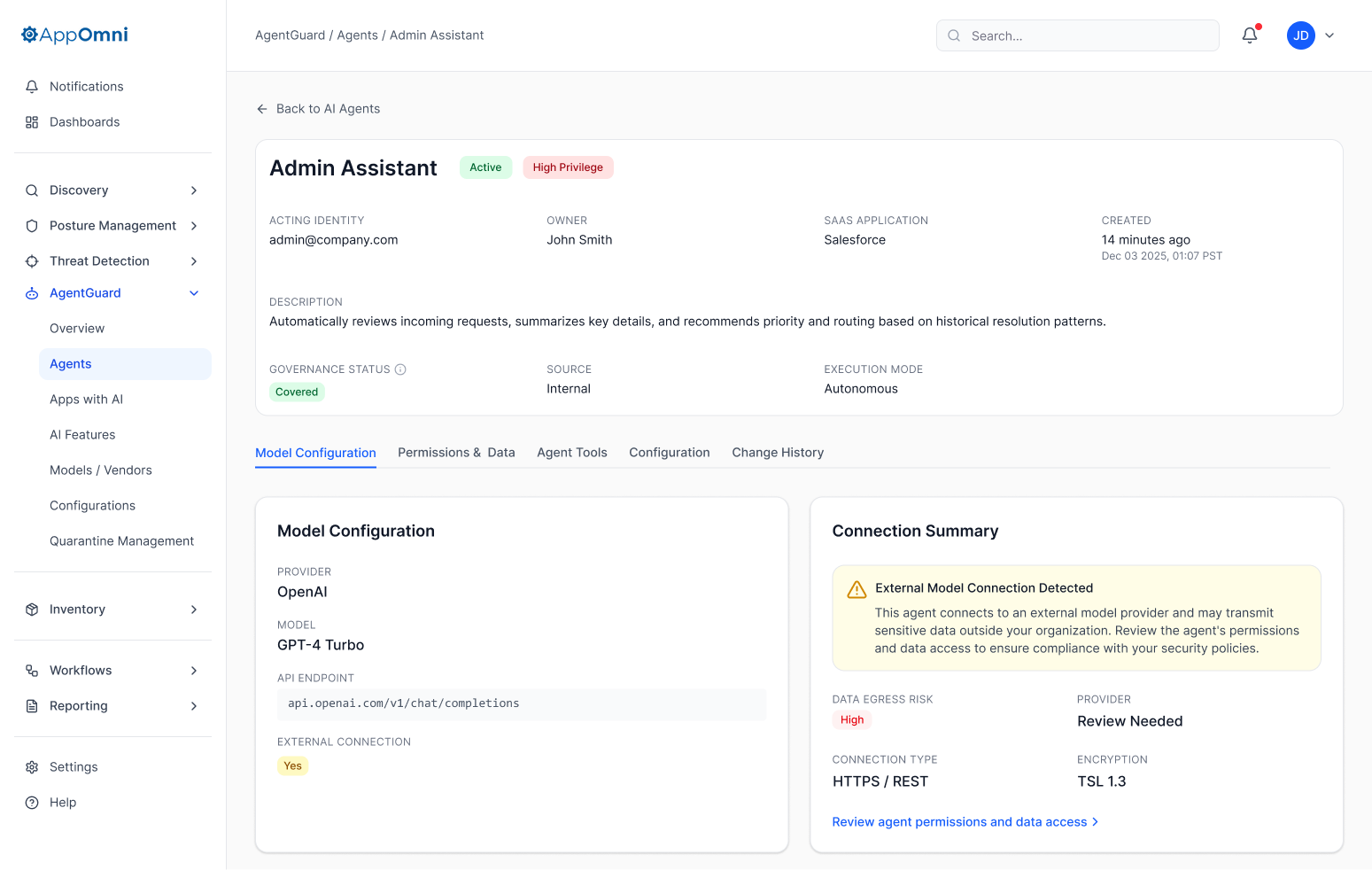

Agents are where autonomy meets risk. The agent inventory is built to make privilege, external model usage, and sensitive data access legible in one place, so high-risk agents are obvious before a policy is ever written against them.

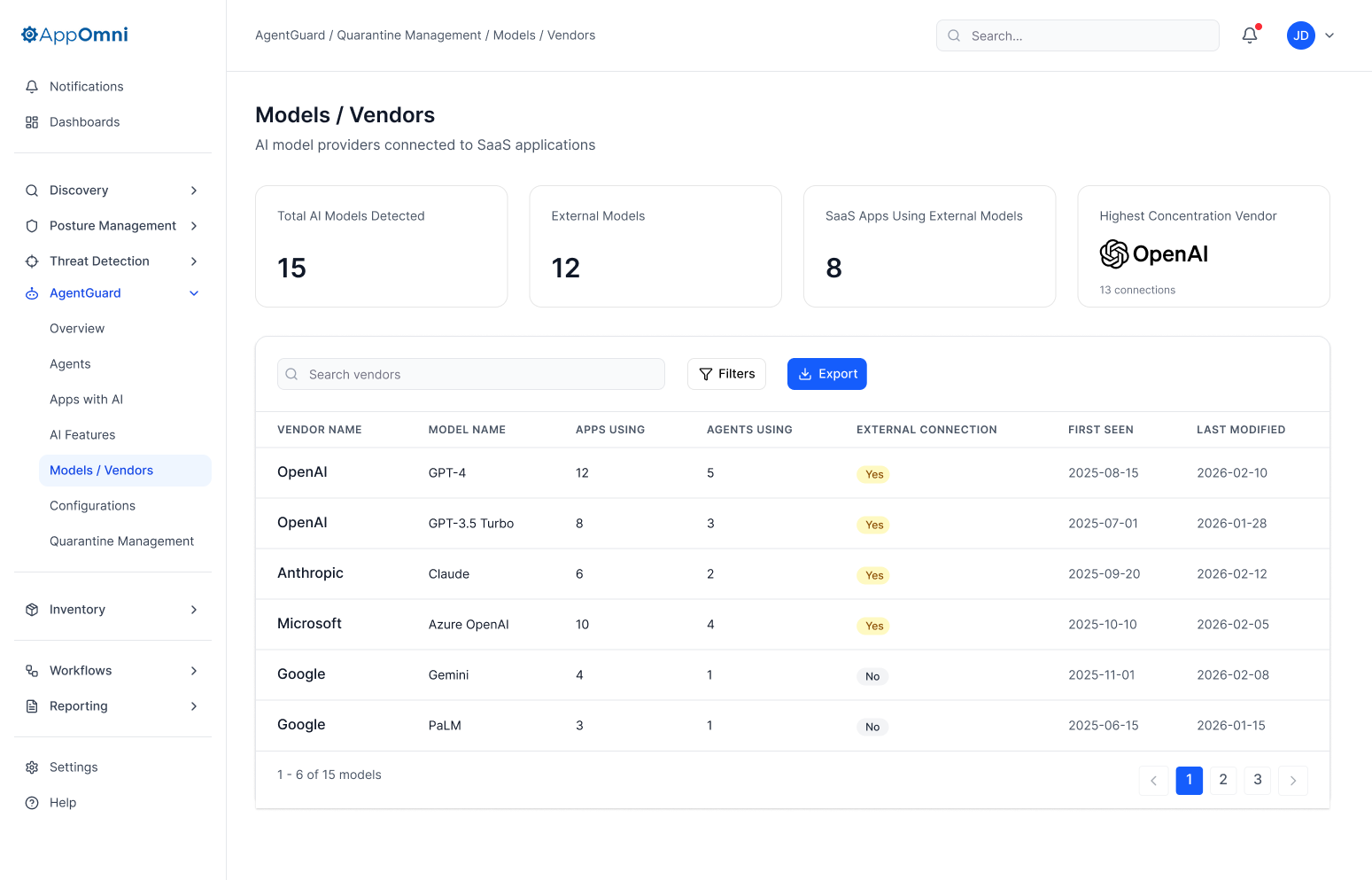

Models & Vendors

External model access is one of the clearest exfiltration paths for AI. The models and vendors view ties provider and model family back to where they show up in the environment, so data governance has the same line of sight that security has for conventional SaaS apps.

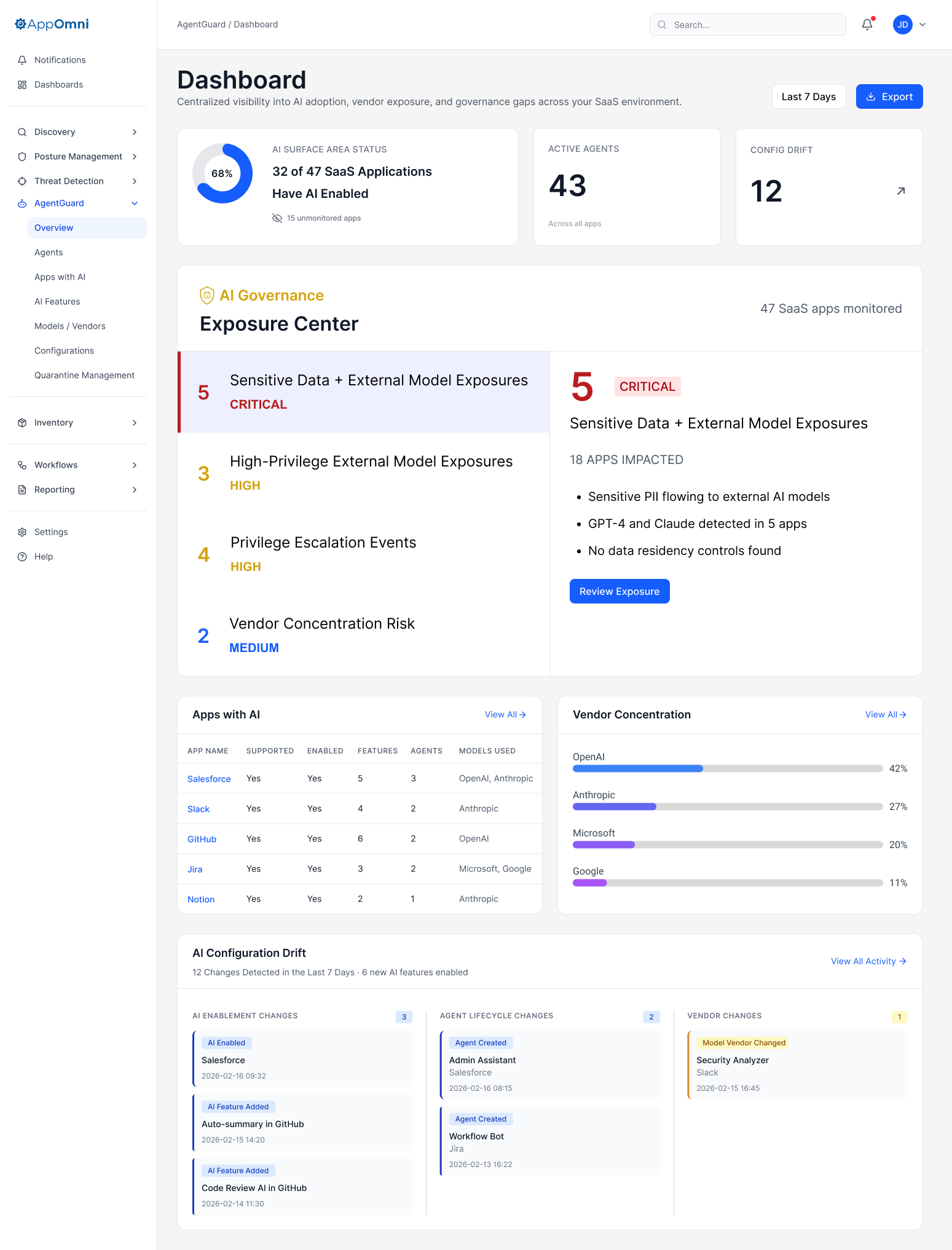

Dashboard

The dashboard is the roll-up: AI surface, exposure, vendor concentration, apps with AI, and drift. It is the day-one answer to “what is running right now?” for leadership and the SOC, before anyone opens an agent or policy detail.

STAGE 2: CONFIGURE

From discovery to policy

Discovery without action is just a longer spreadsheet. The configuration layer is where security teams codify what is acceptable: which agents can run, which models can be connected, which data classes are off-limits, and which prompts must never be allowed. Policies apply as new agents appear. The inventory and policy layers stay in sync by design, not on a project plan.

Configuration policy

Policy authoring needs to be reviewable, versionable, and legible to both security and app owners. The experience below (placeholder) shows the flow for creating and managing a configuration policy end to end.

Creating and deploying a configuration policy for AgentGuard end-to-end.

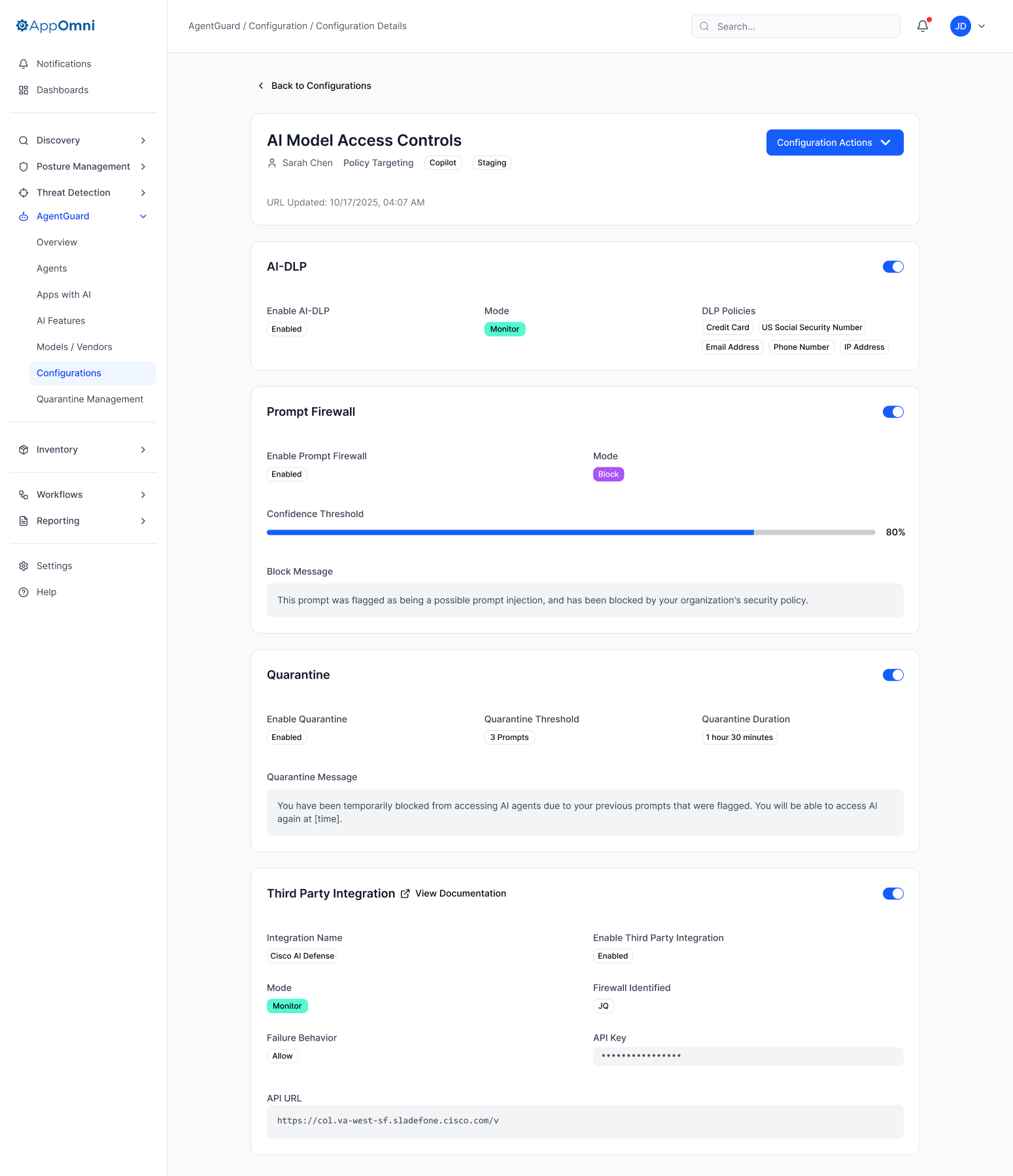

Configuration details

Configuration details tie a single policy's scope, rules, and enforcement behavior to the agents, features, and models it applies to. That is the bridge between a global policy library and a decision a security team can stand behind in an audit.

STAGE 3: ENFORCE

Real-time prompt blocking

Enforcement is not an afterthought. The same policies that are informed by the inventory can block a malicious or disallowed prompt at interaction time, with clear feedback in the product where the end user and security team can both see what happened and why.

Audit trail

When a policy fires (whether it blocks a prompt, flags an agent, or triggers a quarantine), the audit trail has to make the decision reviewable months later. Agent Details consolidates everything an investigator needs in one place: model configuration, connection summary, permissions, and data access. Agent Change History is the timeline view: every configuration change, every policy match, every enforcement event, in order. Together they answer the two questions that come up in every audit: what was the agent allowed to do, and what actually happened?

Quarantine

Quarantine is the controlled response when a user or agent is too risky to leave connected to sensitive data or external models. The flow is designed to be reversible, explicitly logged, and legible in audit: security can isolate, restore, and explain what changed without a one-off support escalation.

What I'd Do Differently

Two months is fast for a product of this scope, and a few decisions are worth revisiting now that pilots are running.

- Risk signals before risk scores. We resisted a single "risk score" per agent because the research said teams wanted the underlying signals. That was the right call, but pilot feedback shows some teams also want a roll-up score for prioritization at scale, especially when an inventory surfaces 4,000 agents. A composite score, with the signals still legible underneath, is on the roadmap.

- Policy templates for day one. Customers love the policy authoring flow once they're in it, but the blank-canvas problem is real. Several pilot teams asked, "what should our first policy even look like?" A library of starter templates (by industry, by SaaS app, by risk class) would shorten time-to-first-policy significantly. We shipped without it and felt the absence.

- Salesforce sooner. Nearly every pilot customer named Salesforce as their most urgent integration. We knew this going in but couldn't bring it forward in scope. In hindsight, swapping one of the launch integrations for early Salesforce coverage would have been worth the trade. It's now in active development.

- More iteration on the dashboard. The dashboard shipped functional but was the section with the least design exploration relative to its visibility. It's the screen leadership opens first, and it deserves another round of work to sharpen the hierarchy and the "what changed since last week?" story.

Impact

AgentGuard validated a thesis the team had been arguing internally for months: that AI governance is a workflow problem, not a reporting problem. Pilot customers confirmed it loudly. Teams that had previously settled for visibility-only tools described AgentGuard as the first product that let them act on what they found, and the demand curve before launch reflected that. More than 30 enterprise customers requested access in a window where competitors were still shipping inventory dashboards.

The discovery scale also reset internal expectations. When a single customer surfaces 4,000+ agents that were invisible the day before, every assumption about "how big this problem is" gets revised upward. That number reshaped the AppOmni roadmap and made AI governance a tier-one product line rather than a feature.

Just as importantly, the four-layer inventory model held up. It emerged from iteration (agents and policy first, then features, apps, models, and dashboard added as gaps surfaced), and once it was in place, it survived contact with customer environments more complex than anything we'd seen during research. It's now the foundation other AppOmni features attach to. Getting the IA right early paid for itself many times over.

AgentGuard shipped at the moment the market needed it, with the architecture to grow into what it needs to become. That's the part of the work I'm proudest of.